
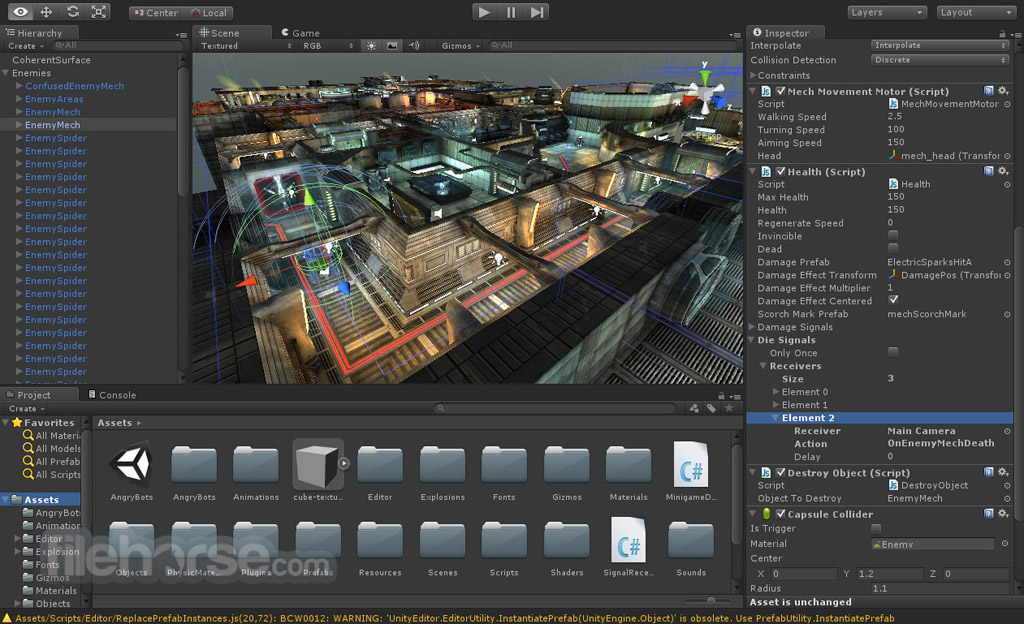

Video conferencing apps like Zoom, Skype, and Google Meet have become immensely popular over the past three years because of the pandemic that shook the world to its core. These applications come with many features, such as whiteboard sharing, screen sharing, chat, remote access, and annotations. Now think of a similar application, but in 3D. Experiencing such features in a 3D application would feel more realistic.

With XR becoming a trend, there is a need to test such features in our 3D applications as well. In this blog, we share insights from our screen share testing journey in Unity. We considered an application where screen share is implemented using Agora Engine. The test strategy shared here can be applied to applications with a similar implementation, and the approach might help in other applications as well.

In our 3D application, we have a GameObject called "DisplayCanvas", under which we have a "DisplayPlane" GameObject. The DisplayPlane has a component called RawImage. When the user clicks the share-screen button in the main scene, the user's active screen is shared and all the other avatars present in that room can view it. When the screen is not shared, the texture value of the RawImage is set to null.

DisplayCanvas > RawImage > Texture

Test: When the screen is shared, we observe that a new texture is generated in the "RawImage" component of DisplayPlane.

- Validate that when the user clicks the share-screen button, the screen appearing on DisplayCanvas is the same as the user's active screen.
- Get the RawImage component of DisplayPlane.
- Convert the texture image as well as the screenshot to Texture2D.
- Compare both textures pixel by pixel.

Using Arium, we implemented UnityPointerClick on the screen-share button.
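To make the pixel-by-pixel comparison from the test steps concrete, here is a minimal sketch of that logic. It is written in Python purely for brevity and is not the code from our Unity project. In Unity, the same loop would run in C# over the pixel arrays of the two Texture2D objects. The names `Texture`, `mismatch_ratio`, and `textures_match` are hypothetical, and the `tolerance` and `max_mismatch` parameters are assumptions added for illustration. With their default values the check is a strict, exact comparison.

```python
from dataclasses import dataclass
from typing import List, Tuple

Pixel = Tuple[int, int, int, int]  # (r, g, b, a), each channel 0-255


@dataclass
class Texture:
    """Hypothetical stand-in for a Texture2D: size plus a flat, row-major pixel list."""
    width: int
    height: int
    pixels: List[Pixel]


def mismatch_ratio(shared: Texture, screenshot: Texture, tolerance: int = 0) -> float:
    """Return the fraction of pixels with any channel differing by more than `tolerance`."""
    if (shared.width, shared.height) != (screenshot.width, screenshot.height):
        raise ValueError("textures must have the same dimensions")
    total = shared.width * shared.height
    if total == 0:
        raise ValueError("textures are empty")
    if len(shared.pixels) != total or len(screenshot.pixels) != total:
        raise ValueError("pixel count does not match width * height")

    mismatched = sum(
        1
        for p, q in zip(shared.pixels, screenshot.pixels)
        if any(abs(c1 - c2) > tolerance for c1, c2 in zip(p, q))
    )
    return mismatched / total


def textures_match(shared: Texture, screenshot: Texture,
                   tolerance: int = 0, max_mismatch: float = 0.0) -> bool:
    """True when the share of differing pixels does not exceed `max_mismatch`."""
    return mismatch_ratio(shared, screenshot, tolerance) <= max_mismatch


if __name__ == "__main__":
    red = (255, 0, 0, 255)
    almost_red = (250, 0, 0, 255)
    shared_screen = Texture(2, 1, [red, red])
    screenshot = Texture(2, 1, [red, almost_red])

    print(textures_match(shared_screen, shared_screen))            # True
    print(textures_match(shared_screen, screenshot))               # False
    print(textures_match(shared_screen, screenshot, tolerance=8))  # True
```

A small tolerance may be useful if the shared screen passes through lossy video encoding before it reaches the RawImage, but that is an assumption to verify against your own setup. The dimension check matters too: if the screenshot and the shared texture differ in size, one of them would need to be resized before comparing.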
